
In this blog post, I would like to discuss entropy maximization and a couple of maximum entropy distributions.

For example, suppose you have only two letters, A and B.

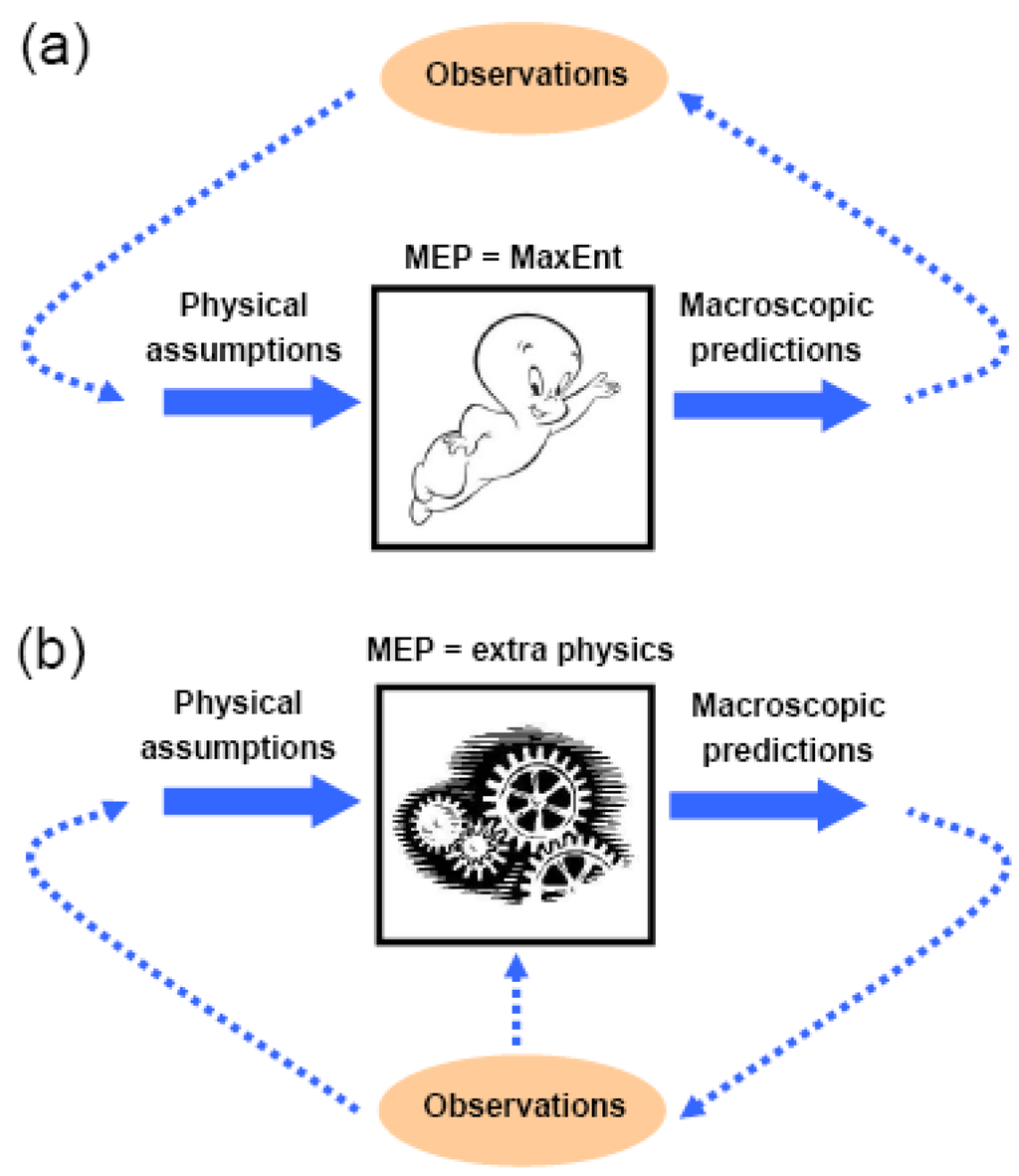
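To make the two-letter example concrete, here is a minimal Python sketch. It assumes letter A appears with probability p and B with probability 1 - p, computes the Shannon entropy in bits, and scans a grid of p values. The entropy peaks at exactly 1 bit when p = 0.5, that is, when both letters are equally likely. The function name `binary_entropy` is my own choice for illustration.

```python
import math

def binary_entropy(p: float) -> float:
    """Entropy in bits of a two-letter source: A with probability p, B with 1 - p."""
    if p in (0.0, 1.0):
        return 0.0  # a certain outcome carries no information
    q = 1.0 - p
    return -(p * math.log2(p) + q * math.log2(q))

# Scan p over a grid from 0.00 to 1.00 and find where the entropy is largest.
grid = [i / 100 for i in range(101)]
best_p = max(grid, key=binary_entropy)
print(best_p, binary_entropy(best_p))  # 0.5 1.0
```

Any skew away from p = 0.5 lowers the entropy, and the extremes p = 0 or p = 1 drop it to zero.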

According to the second law of thermodynamics (the principle of increase of entropy), isolated systems spontaneously evolve towards thermodynamic equilibrium, the state with maximum entropy. This makes maximum entropy distributions the most natural choice under a given set of constraints. In the two-letter example, the maximum entropy distribution is the one that gets the most information across per letter for a fixed alphabet. More generally, given some prior information about a distribution P, we consider all probability distributions that satisfy it; this prior data serves as the constraints on the probability distribution. The maximum entropy principle is then: among all distributions satisfying the constraints, choose the one with the largest entropy.
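The constrained maximization can be written out with Lagrange multipliers. As a sketch, assume a discrete distribution $p_1, \dots, p_n$ over outcomes $x_1, \dots, x_n$ and a single testable constraint fixing the expected value of some function $f$ to a value $F$ (the symbols $f$, $F$, $\mu$, $\lambda$ and $Z$ are notation introduced here for illustration):

```latex
\begin{aligned}
&\max_{p}\; H(p) = -\sum_{i=1}^{n} p_i \log p_i
\quad\text{subject to}\quad \sum_{i=1}^{n} p_i = 1, \qquad \sum_{i=1}^{n} p_i f(x_i) = F,\\[4pt]
&\mathcal{L}(p, \mu, \lambda) = -\sum_{i=1}^{n} p_i \log p_i
  - \mu\Big(\sum_{i=1}^{n} p_i - 1\Big)
  - \lambda\Big(\sum_{i=1}^{n} p_i f(x_i) - F\Big),\\[4pt]
&\frac{\partial \mathcal{L}}{\partial p_i} = -\log p_i - 1 - \mu - \lambda f(x_i) = 0
\;\Longrightarrow\;
p_i = \frac{e^{-\lambda f(x_i)}}{Z(\lambda)}, \qquad
Z(\lambda) = \sum_{j=1}^{n} e^{-\lambda f(x_j)}.
\end{aligned}
```

With only the normalization constraint ($\lambda = 0$), every $p_i$ equals $1/n$: the uniform distribution, which for the two letters A and B gives each a probability of $1/2$. With a mean constraint, $\lambda$ is chosen so that $\sum_i p_i f(x_i) = F$ holds.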

The principle of maximum entropy states that the probability distribution which best represents the current state of knowledge is the one with the largest entropy, in the context of precisely stated prior data (such as a proposition that expresses testable information). The principle of maximum entropy is a method for assigning values to probability distributions on the basis of partial information.
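As a worked instance of assigning probabilities from partial information, consider a six-sided die whose only known property is an average roll of 4.5 (a classic textbook example; the target value 4.5 is an illustrative assumption here). The sketch below relies on the standard result that, under a single mean constraint, the maximum entropy distribution has the exponential form $p_i \propto e^{-\lambda i}$, and it finds $\lambda$ by bisection. The helper names `gibbs`, `mean`, `entropy` and `max_entropy_die` are hypothetical, chosen for this example.

```python
import math

FACES = range(1, 7)  # outcomes of a six-sided die

def gibbs(lam: float) -> list[float]:
    """Exponential-form distribution p_i proportional to exp(-lam * i) over the faces."""
    weights = [math.exp(-lam * i) for i in FACES]
    z = sum(weights)  # normalizing constant Z(lam)
    return [w / z for w in weights]

def mean(p: list[float]) -> float:
    """Expected face value under distribution p."""
    return sum(i * pi for i, pi in zip(FACES, p))

def entropy(p: list[float]) -> float:
    """Shannon entropy in nats (terms with zero probability contribute nothing)."""
    return -sum(pi * math.log(pi) for pi in p if pi > 0)

def max_entropy_die(target_mean: float, lo: float = -10.0, hi: float = 10.0,
                    iters: int = 200) -> list[float]:
    """Bisect on lam; the mean of gibbs(lam) decreases monotonically as lam grows."""
    for _ in range(iters):
        mid = (lo + hi) / 2
        if mean(gibbs(mid)) > target_mean:
            lo = mid  # mean too high: move towards larger lam
        else:
            hi = mid  # mean too low (or exact): move towards smaller lam
    return gibbs((lo + hi) / 2)

p = max_entropy_die(4.5)
print([round(x, 4) for x in p])  # probabilities rise towards the high faces
print(round(mean(p), 6))  # 4.5
```

Bisection works here because the derivative of the mean with respect to $\lambda$ is minus the variance, so the mean falls monotonically as $\lambda$ increases; a general-purpose root finder would also do. Setting the target mean to 3.5 gives $\lambda = 0$ and recovers the uniform distribution: with no information beyond the fair-die average, all faces are equally likely.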
